The default Deductive is good. The Deductive your team has been teaching for two months is the one nobody on your team can imagine working without. There are two modes of teaching. They feed the same downstream pipeline and they compound:Documentation Index

Fetch the complete documentation index at: https://docs.deductive.ai/llms.txt

Use this file to discover all available pages before exploring further.

1. Implicit learning: your team is already doing it

When your team talks about an alert in a Slack thread, replies to Deductive in a chat, or follows up on an investigation, Deductive reads the conversation and picks up signals from it. You don’t have to do anything special. The act of working the problem with teammates is the training data. What gets picked up:- Confirmations. “Yep, this was the deploy”, “good catch”, “agreed, restart fixed it”. Treated as positive signal on the agent’s diagnosis.

- Corrections. “Actually it was the cache eviction storm”, “the metric was wrong, it under-counts cache hits”, “we ruled that out, look at the upstream gateway”. Treated as negative signal on the path the agent took, plus a captured correction.

- Resolutions. “We rolled back, problem solved”, “Atlas is on it, ETA 30 min”. Treated as outcome data. What fixed the issue, who owned it.

- Conventions and gotchas. “Don’t trust the staging error rate, it’s noisy below 0.05”, “ignore

legacy-*namespaces, they’re being deprecated”. Treated as durable team knowledge.

2. Explicit learning: when you want to be deliberate

Every Deductive message (chat reply, alert investigation, canvas draft) has a comment box. Use it when you want to be precise about what worked or what went wrong.

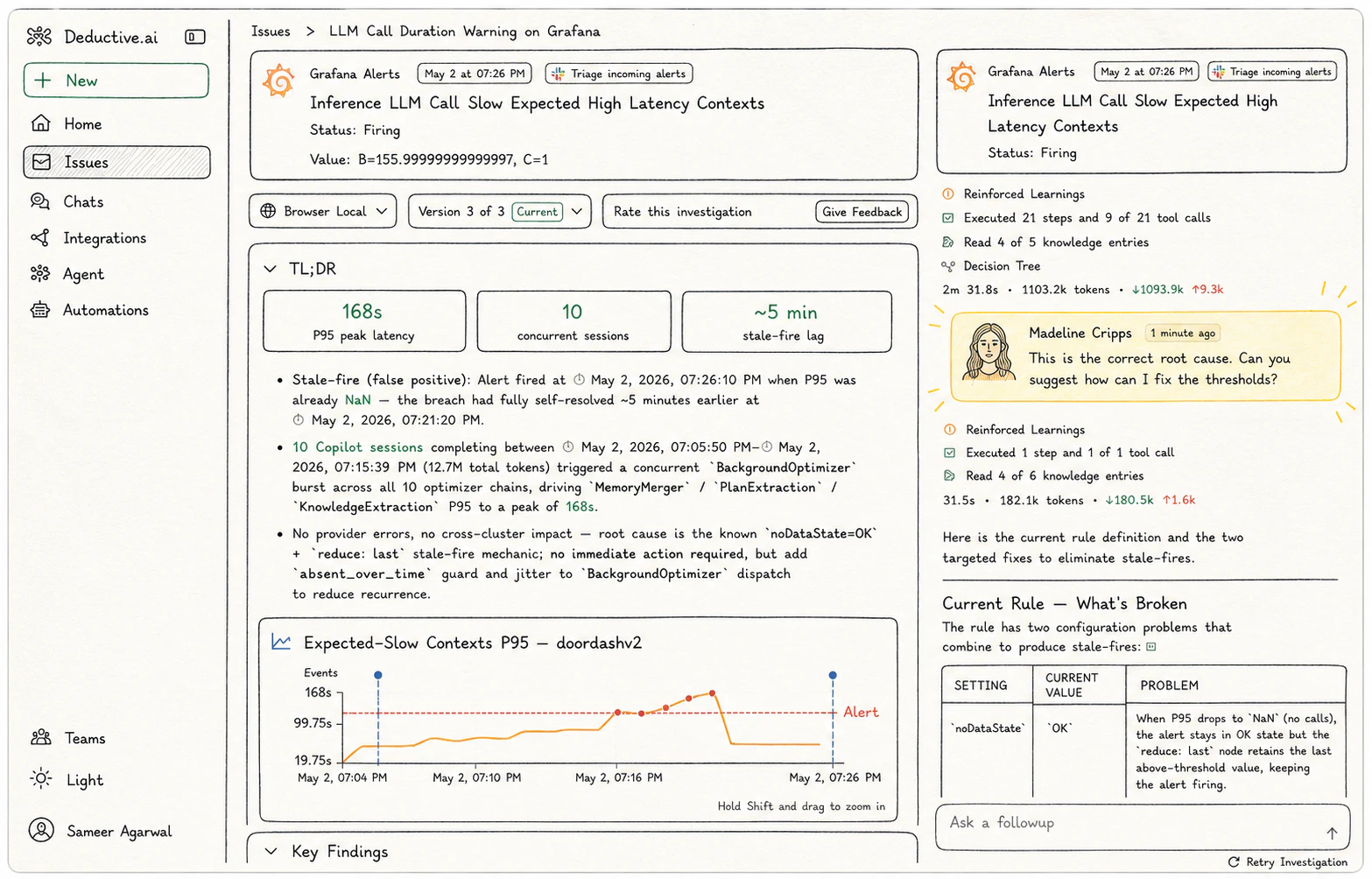

- When the answer is right. A short confirmation is enough. “Good”, “this is the right call”, “spot on”. The decision tree that produced the answer gets reinforced, and the supporting evidence gets distilled into a memory the agent can draw on later.

- When the answer is wrong. Write one line saying what went sideways. “Ruled out the deploy too early”, “missed the parallel job”, “answered confidently with stale data”. The decision-tree branch that took the agent there gets penalized for similar future questions, and the correction itself becomes a memory.

- When the answer is right but the path was wasteful. Say so. “Right answer, wrong tool, should have used

kubectl describeinstead.” Useful for nudging the agent toward better-shaped reasoning even when the conclusion is correct.

What both modes compound into

Whether the signal comes from a Slack thread or an inline comment, it lands in the same place and produces the same two effects:Decision trees get reinforced or penalized

Every signal is recorded alongside the decision tree that produced the answer. Positive signals mark the sequence of steps that led to the correct conclusion as canonical for that class of question: the next time a similar question shows up, Deductive will reuse that exact path. Negative signals mark the missteps as branches to avoid. You can see this happen on the Decision Trees page: each node carries areinforced_count and last_reinforced_at in its metadata.

Memories feed the knowledge graph

Signals also get distilled into memories, short structured records of what your team confirmed or corrected. Memories are the source material that knowledge entries are built from (each entry on the Knowledge Archive has a “X source memories” link back to the threads and comments it came from). When a future investigation touches a similar topic, those memories surface as grounding context.Do this now (3 min)

Two quick wins, one of each mode:- Implicit. Find the most recent alert thread in Slack where Deductive auto-investigated. Reply with one sentence summarizing what actually fixed it (or what the real cause turned out to be). The agent learns from that reply as if you’d written it as feedback.

- Explicit. Open one of yesterday’s investigations in the web app. Find an answer that was right and leave a one-line confirmation. Find one place the agent took an unnecessary step and leave a one-line comment saying exactly what should have happened instead.

The compounding effect

A team that does this naturally (talking to each other in alert threads, leaving the occasional comment when something is clearly wrong) has a meaningfully different agent within a quarter than the team that just uses Deductive out of the box. The mechanism is invisible until you see it: a question you asked last month gets answered faster and better today because of conversations and comments your teammates left in between.Try this next

Use Deductive in Slack

Implicit learning is mostly Slack-driven. The patterns that feed the loop best.

Continuous learning

See where both modes show up: reinforced decision trees and a growing knowledge graph.